Investing in Poetiq, an alternative scaling law for intelligence

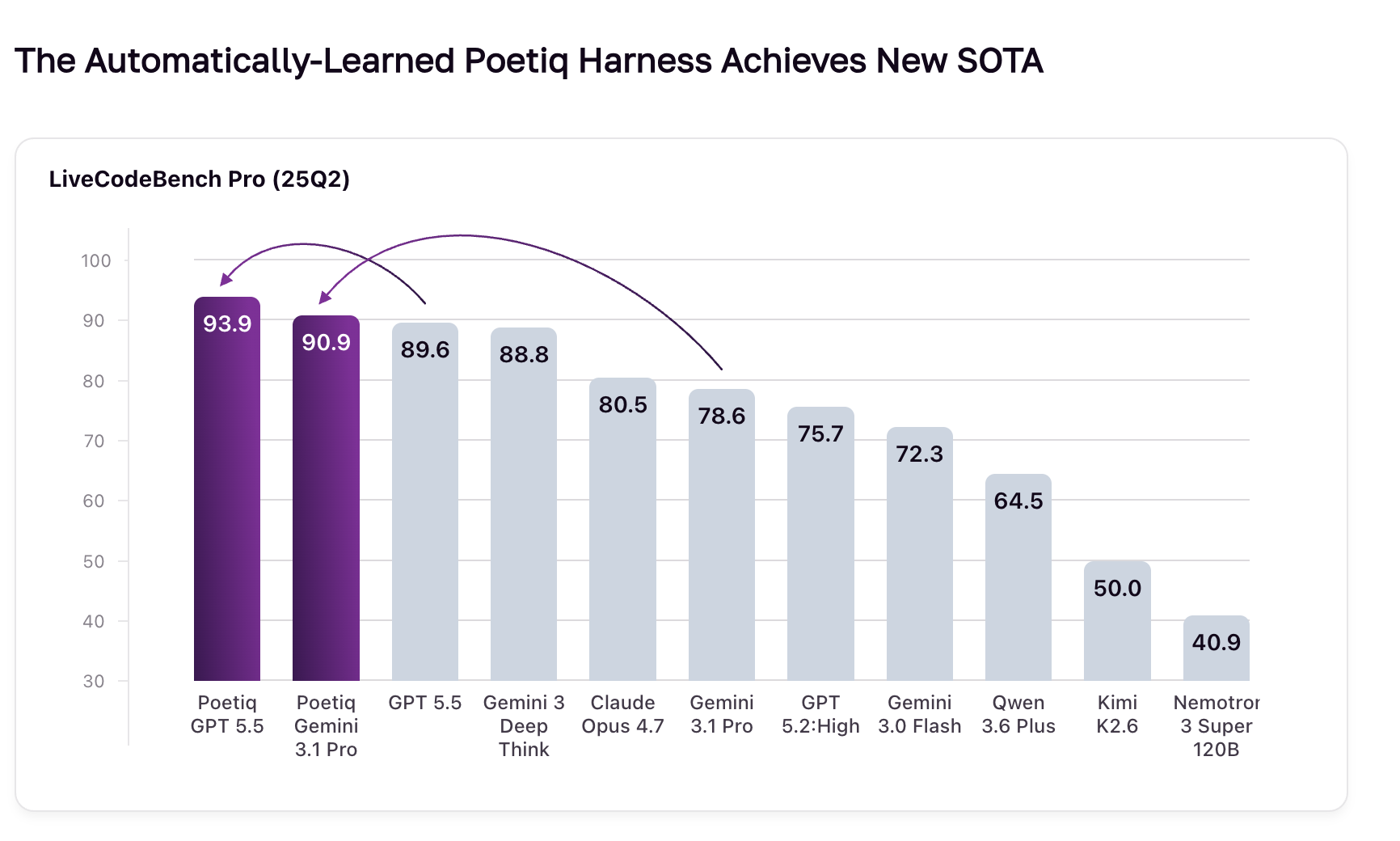

We're excited to announce our investment in Poetiq. Just today, they released results on LiveCodeBench Pro showing their harness lifted Gemini 3.1 Pro past Google's own flagship reasoning product, Deep Think. Apply the same harness to GPT 5.5 and it climbs to 93.3%, a new state of the art.

No fine-tuning. No privileged model access. No hand-built pipelines.

Today, most people build harnesses (the code, prompts, and structure wrapped around a model) by hand with tools like Claude Code. That is real engineering work.

Poetiq's contribution: a meta-system that automates the entire process. You give it a hard task; it gives you a harness that wraps any base model and outperforms it. When the next frontier model ships, the same harness still works on top of it. You don't lose your investment.
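To make "a harness that wraps any base model" concrete, here is a toy sketch in Python. It is not Poetiq's system or anything they have published. Every name in it (`make_harness`, `Model`, the retry-with-feedback loop, the stub models) is an illustrative assumption. It shows only the general shape: the harness takes the model as a plain callable, so swapping in a newer model requires no change to the harness itself.

```python
from typing import Callable

# Hypothetical interface: a "model" is any function from prompt text to answer text.
Model = Callable[[str], str]


def make_harness(
    prompt_template: str,
    checker: Callable[[str], bool],
    attempts: int = 3,
) -> Callable[[Model, str], str]:
    """Build a toy harness: prompt, check the answer, retry with feedback.

    This retry loop is an assumed stand-in for the "code + prompts + structure"
    of a real harness, chosen only because it is short.
    """

    def harness(model: Model, task: str) -> str:
        feedback = ""
        answer = ""
        for _ in range(attempts):
            prompt = prompt_template.format(task=task, feedback=feedback)
            answer = model(prompt)
            if checker(answer):
                return answer
            # Feed the failed attempt back into the next prompt.
            feedback = f"Previous attempt failed the check: {answer}"
        return answer  # best effort after exhausting attempts

    return harness


# Two stub "base models" (assumptions for the demo, not real APIs).
def weak_model(prompt: str) -> str:
    # Only gets it right once it sees feedback about a failed attempt.
    return "4" if "failed" in prompt else "5"


def strong_model(prompt: str) -> str:
    return "4"


harness = make_harness(
    prompt_template="Task: {task}\n{feedback}\nAnswer:",
    checker=lambda answer: answer.strip() == "4",
)

# The same harness object runs unchanged on top of either model.
print(harness(weak_model, "What is 2 + 2?"))
print(harness(strong_model, "What is 2 + 2?"))
```

In this toy setup the weak model alone answers incorrectly, while the same model inside the harness recovers on its second attempt. The harness code never refers to a specific model, which is the property the paragraph above describes.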

Poetiq is led by founders Ian Fischer and Shumeet Baluja, longtime Googlers and DeepMind researchers with 50+ combined years building at the frontier.

Poetiq is 7 people. Their last benchmark cost under $100K to optimize. The frontier labs they beat spend hundreds of millions on each training run.

The standard way to improve AI is to train a new model from scratch. It costs hundreds of millions of dollars and takes months. Poetiq's thesis is that you can also improve intelligence much more cheaply and quickly by recursively optimizing how existing models reason. The two work together, not against each other.

This is the holy grail of AI: recursive self-improvement. AI that makes itself smarter.

The metaphor Ian uses is stilts. Whatever the tallest model is, you stand on top of it and you're taller. Frontier labs are the ground, not the competition.

Huge congrats to Ian Fischer, Shumeet Baluja, and the whole Poetiq team.

P.S. They're hiring AI scientists and researchers. Read more on their careers page.